HTTP is the underlying mechanism that runs the web. It is the language spoken by browsers and web servers to communicate, download webpage elements and upload user data. The version we currently use is 1.1, a specification that is now almost 15 years old.

And while version 1.1 has served us well, HTTP version 2 has come. The Internet Engineering Task Force is currently formalizing the standard and plans to submit a final protocol proposal to the Internet Engineering Steering Group in November 2014. This is the formal route that an internet standard takes on its path from working draft to accepted specification.

Update 2015-02-18: the HTTP/2.0 specification has been approved and will soon be assigned an RFC number, and be published. HTTP/2.0 is, as they say, done!

HTTP2 is not a complete rewrite. The protocols in use at the moment work well and are understood by millions of devices. And due to the complexity of rolling out a new internet protocol, staged and staggered approaches are almost always taken. HTTP2 will be an upgrade rather than a redo.

The main improvements being packaged into HTTP2 relate to speed. Webpages have only grown heavier and more complex over time, and our desire to access them from different types of devices and different types of locations has only increased – often leapfrogging the infrastructure necessary to provide broadband speed in all use cases.

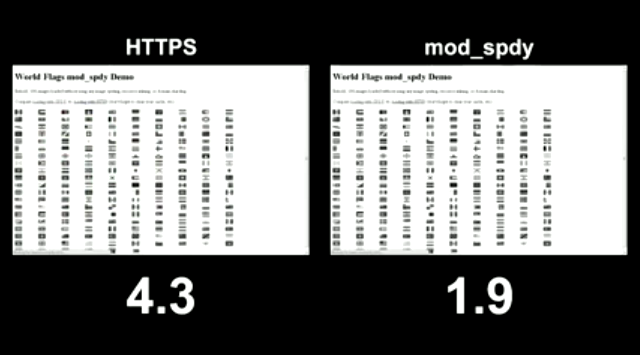

To see the kind of speed increase that is possible, here is a YouTube video which demonstrates SPDY, the protocol that HTTP2 is based on.

Figure 1: SPDY vs HTTPS speed comparison, SPDY wins by 2.4 seconds

The Basics

The basic principles of HTTP are not going to change. If you already know your GET from your POST, your referrers from your cookies – then rest easy that the vast majority of this will be untouched. HTTP2 really focuses on how the data is transmitted “on the wire”.

HTTP2 addresses the slowest portion of loading a website – how the data is transferred from server to device. Faster transmission speed will result in faster loading websites, snappier interfaces and happier users. And in a world where optimizing site response time produces real financial impact, the speed that your website loads is a key business metric that can’t be ignored.

HTTP2 will be transparently upgradable – if your browser and the server it is connected to both support it, the connection will be upgraded to HTTP2. And your browser will remember the server’s support for HTTP2 for next time. From the user’s point of view, their browser will update seamlessly and they will suddenly begin enjoying faster connection speeds.
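
As a rough illustration of that upgrade decision, here is a minimal Python sketch. The function names and the `known_h2_hosts` cache are hypothetical, and real browsers negotiate the protocol during connection setup with more rules than this; the labels "h2" and "http/1.1" are the identifiers used for the two protocols during TLS negotiation.

```python
from typing import Optional, Sequence

# Hypothetical cache: hosts that agreed to HTTP/2 on an earlier visit.
known_h2_hosts = set()


def negotiate(client_prefs: Sequence[str], server_supported: Sequence[str]) -> Optional[str]:
    """Return the first protocol the client prefers that the server also supports."""
    supported = set(server_supported)
    for proto in client_prefs:
        if proto in supported:
            return proto
    return None


def connect(host: str, client_prefs: Sequence[str], server_supported: Sequence[str]) -> Optional[str]:
    """Pick a protocol for this connection and remember hosts that speak HTTP/2."""
    chosen = negotiate(client_prefs, server_supported)
    if chosen == "h2":
        known_h2_hosts.add(host)
    return chosen


# A browser that prefers HTTP/2 but can fall back to HTTP/1.1.
browser_prefs = ["h2", "http/1.1"]
upgraded = connect("new.example", browser_prefs, ["h2", "http/1.1"])
fallback = connect("old.example", browser_prefs, ["http/1.1"])
```

Neither side has to be configured by the user: the older server simply never offers the newer protocol, so the connection stays on HTTP/1.1.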

What Is Changing

The current implementation of HTTP is slow. Resources are downloaded one at a time, and the browser must first request an asset before it can be delivered. Plenty of clever hacks have been discovered over the years to wrangle slightly faster load times but none of these will compare to HTTP2’s changes.

HTTP2 implements multiplexing, meaning many requests can be made at once and resources can be delivered whenever they are ready, not necessarily in a particular order or even only after they’ve been requested. The new protocol is optimized to how websites have evolved since 1.1 became mainstream.
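
To make multiplexing a little more concrete, the sketch below interleaves chunks of several resources over one simulated connection and reassembles them on the other side. It is a toy model under stated assumptions: the function names, the chunk size and the strict round-robin order are all illustrative, and a real HTTP2 stack also handles priorities and flow control.

```python
from collections import defaultdict
from itertools import zip_longest


def split_chunks(data: bytes, size: int):
    """Cut a resource body into fixed-size pieces."""
    return [data[i:i + size] for i in range(0, len(data), size)]


def multiplex(resources: dict, chunk_size: int = 4):
    """Interleave chunks from every stream, tagging each with its stream ID."""
    per_stream = [
        [(stream_id, chunk) for chunk in split_chunks(body, chunk_size)]
        for stream_id, body in resources.items()
    ]
    frames = []
    for round_of_frames in zip_longest(*per_stream):
        # Shorter streams run out first; skip their empty slots.
        frames.extend(f for f in round_of_frames if f is not None)
    return frames


def demultiplex(frames):
    """Rebuild each resource by concatenating its chunks in arrival order."""
    bodies = defaultdict(bytes)
    for stream_id, chunk in frames:
        bodies[stream_id] += chunk
    return dict(bodies)


# Three resources share one connection; stream IDs 1, 3 and 5 are illustrative.
resources = {1: b"<html>index</html>", 3: b"body{margin:0}", 5: b"PNGDATA"}
frames = multiplex(resources)
restored = demultiplex(frames)
```

Because every chunk carries its stream ID, a small stylesheet no longer has to wait behind a large page that happened to be requested first.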

The new protocol is binary, whereas the old was text-based. Binary protocols can express more meaning in less space which gives HTTP2 a speed boost out of the gate. Version 2 is based on a previous initiative from Google called SPDY, a data transfer protocol that made tested sites up to 50% faster to deliver and load.
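
For a feel of what "binary" means in practice, the following sketch packs a single frame using the layout from the published HTTP/2 specification: a 9-byte header (24-bit payload length, 8-bit type, 8-bit flags, one reserved bit plus a 31-bit stream ID) followed by the payload. The helper name `pack_frame` and the constants are mine, and this is only a sketch, not a working HTTP2 implementation.

```python
import struct

FRAME_TYPE_DATA = 0x0   # frame carrying body bytes
FLAG_END_STREAM = 0x1   # marks the last frame of a stream


def pack_frame(frame_type: int, flags: int, stream_id: int, payload: bytes) -> bytes:
    """Serialize one frame: 9-byte binary header followed by the payload."""
    if len(payload) >= 2 ** 24:
        raise ValueError("payload too large for a 24-bit length field")
    if not 0 <= stream_id < 2 ** 31:
        raise ValueError("stream ID must fit in 31 bits")
    header = len(payload).to_bytes(3, "big") + struct.pack(">BBI", frame_type, flags, stream_id)
    return header + payload


frame = pack_frame(FRAME_TYPE_DATA, FLAG_END_STREAM, 1, b"hello")
```

All the bookkeeping for this frame fits in nine bytes, which is shorter than a single text header line such as "Content-Length: 33414".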

Strictly speaking your current website will work as it currently does following the change-over to HTTP2, but it could be optimized to take full advantage of the change. There are clear advantages to supporting HTTP2 but there are also clear advantages to being an early adopter, but we’ll get to this a little later.

New Thinking

Binary Debugging

Being a binary protocol means that debugging HTTP2 will be a little trickier than its predecessors. If you’ve ever examined HTTP traffic passing to or from a web server you’ll be familiar with captures like this:

GET / HTTP/1.1

Host: www.dotmobi.com

User-Agent: Mozilla/5.0 (Windows; U; Windows NT 5.1; en-US; rv:1.7.7) Gecko/20050414 Firefox/1.0.3

Accept: text/xml,application/xml,application/xhtml+xml,text/html;q=0.9,text/plain;q=0.8,image/png,*/*;q=0.5

Accept-Language: en-us,en;q=0.5

Accept-Encoding: gzip,deflate

Accept-Charset: ISO-8859-1,utf-8;q=0.7,*;q=0.7

Keep-Alive: 300

Connection: keep-alive

If-Modified-Since: Mon, 09 May 2005 21:01:30 GMT

If-None-Match: "26f731-8287-427fcfaa"

And this:

HTTP/1.1 200 OK

Date: Fri, 13 May 2005 05:51:12 GMT

Server: Apache/1.3.x (Unix)

Last-Modified: Fri, 13 May 2005 05:25:02 GMT

ETag: "26f725-8286-42843a2e"

Accept-Ranges: bytes

Content-Length: 33414

Keep-Alive: timeout=15, max=100

Connection: Keep-Alive

Content-Type: text/html

Following the change-over, if you want to debug HTTP requests and responses by eye then it’ll be necessary to parse the binary protocol using an interpreter. Many of the traffic analysis tools (“sniffers”) are already addressing this. The popular Wireshark supports HTTP2 through plugins for instance. Eventually support will be standard.
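
To give a rough idea of what such an interpreter does, here is a small Python sketch that walks a byte string and prints one readable line per frame. It assumes the 9-byte frame header and the frame type codes from the published HTTP/2 specification; the function name `describe_frames` is hypothetical, and a real tool would also decode the payloads.

```python
# Frame type codes as defined by the HTTP/2 specification.
FRAME_TYPES = {
    0x0: "DATA", 0x1: "HEADERS", 0x2: "PRIORITY", 0x3: "RST_STREAM",
    0x4: "SETTINGS", 0x5: "PUSH_PROMISE", 0x6: "PING", 0x7: "GOAWAY",
    0x8: "WINDOW_UPDATE", 0x9: "CONTINUATION",
}


def describe_frames(raw: bytes):
    """Return one human-readable summary line per frame found in raw."""
    lines = []
    offset = 0
    while offset + 9 <= len(raw):
        length = int.from_bytes(raw[offset:offset + 3], "big")
        frame_type = raw[offset + 3]
        flags = raw[offset + 4]
        # The top bit of the last four header bytes is reserved; mask it off.
        stream_id = int.from_bytes(raw[offset + 5:offset + 9], "big") & 0x7FFFFFFF
        name = FRAME_TYPES.get(frame_type, f"UNKNOWN(0x{frame_type:02x})")
        lines.append(f"{name} stream={stream_id} flags=0x{flags:02x} length={length}")
        offset += 9 + length  # skip over the payload to the next header
    return lines


# An empty SETTINGS frame followed by a 5-byte DATA frame on stream 1.
capture = bytes.fromhex("000000040000000000") + bytes.fromhex("000005000100000001") + b"hello"
summary = describe_frames(capture)
for line in summary:
    print(line)
```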

Compression

Compression will be central to the new HTTP2 protocol. At present web servers must be configured to support data compression like gzip and deflate, but these can only be used if the browser tells the server it supports them. HTTP2 will make compression a requirement. This is a long overdue fix and highlights one of the driving concepts behind HTTP2 – forcing best practice is superior to simply supporting it. As we’ll see this tactic is used elsewhere.
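
The opt-in behaviour used at present can be sketched in a few lines of Python. This is a simplified illustration: the function name `encode_body` is hypothetical, and it ignores the quality values (such as `q=0.5`) that a real server would take into account when reading the Accept-Encoding header.

```python
import gzip


def encode_body(body: bytes, accept_encoding: str):
    """Compress the body only if the browser advertised gzip support."""
    offered = {
        token.split(";")[0].strip().lower()
        for token in accept_encoding.split(",")
    }
    if "gzip" in offered:
        return gzip.compress(body), "gzip"
    # No advertised support: send the bytes unchanged.
    return body, "identity"


page = b"<p>Hello, HTTP</p>" * 100
compressed, used = encode_body(page, "gzip,deflate")
plain, fallback = encode_body(page, "")
```

The browser that stays silent about its abilities gets the full-size response, which is exactly the situation a compress-by-default protocol is meant to avoid.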

As well as mandatory data compression, HTTP2 will include header compression. The request headers sent by the browser and the response headers sent by the server are up to 50% dead weight, long-hand human-readable instructions that get sent with each and every exchange.
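
The idea behind header compression can be shown with a toy Python sketch in which both ends remember the headers they have already exchanged, so a repeated header travels as a small number instead of a full line of text. This is not the actual compression scheme used by HTTP2; the `HeaderTable` class and its methods are hypothetical and only illustrate the principle.

```python
class HeaderTable:
    """Toy shared table: headers seen before are replaced by their index."""

    def __init__(self):
        self.entries = []   # index -> (name, value)
        self.index = {}     # (name, value) -> index

    def _remember(self, pair):
        self.index[pair] = len(self.entries)
        self.entries.append(pair)

    def encode(self, headers):
        """Sender side: emit an index for known headers, the full pair otherwise."""
        encoded = []
        for pair in headers:
            if pair in self.index:
                encoded.append(self.index[pair])
            else:
                self._remember(pair)
                encoded.append(pair)
        return encoded

    def decode(self, encoded):
        """Receiver side: expand indexes and learn any new pairs."""
        headers = []
        for item in encoded:
            if isinstance(item, int):
                headers.append(self.entries[item])
            else:
                self._remember(item)
                headers.append(item)
        return headers


browser, server = HeaderTable(), HeaderTable()
first = [(":path", "/"), ("host", "www.dotmobi.com"), ("accept-encoding", "gzip,deflate")]
second = [(":path", "/style.css"), ("host", "www.dotmobi.com"), ("accept-encoding", "gzip,deflate")]

wire_first = browser.encode(first)     # everything is new, so all pairs are sent in full
wire_second = browser.encode(second)   # only the changed path is sent in full
decoded_first = server.decode(wire_first)
decoded_second = server.decode(wire_second)
```

On the second request only the path is spelled out; the host and accept-encoding headers shrink to two small integers.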

Encryption

SSL encryption will likely be baked into HTTP2. If ratified, this would be a sea change, because as it currently works SSL has to be manually added to a website and it typically involves a real-world cost and system administration efforts to do so. Under the new standard any data we send to or receive from web servers will be safe from eavesdropping.

Up till now SSL has been meant to achieve two goals – (1) to secure data in transmission and (2) to identify the company or individual running a particular website.

Figure 2: Standard SSL certificate error screen

In practice consumers learnt to trust any SSL connection without digging into the fine print of who owned the certificate. As a result, they would only be alarmed on discovering a security-sensitive website that was not using SSL, or on seeing a browser error saying that the SSL certificate in use was not signed by a central signing authority.

This had the pronounced effect of restricting the widespread use of SSL. The designers of HTTP2 seem intent on avoiding the same mistake this time around.

Hacking away at the Hacks

In the past we cleverly leveraged HTML tricks, CSS ploys, and HTTP cheats to produce the fastest websites possible. You are no doubt familiar with many of these – domain sharding, image sprites, file concatenation and so on.

Many of these will no longer be useful or even recommended once HTTP2 becomes the norm. With multiplexed HTTP2 connections we will finally be in a situation where overhead is no longer the major concern. And we will be able to worry more about the content and less about the process of delivering it. A brave new world in web development.

That being said, some of the hacks may still be valid. Stashing static content on CDNs is still probably advisable. Reducing DNS lookups is still wise. Putting CSS includes at the top of the page and JS includes at the bottom are both still worth doing.

Image sprites will no longer be necessary as multiple image files will be delivered in one data stream; the previous overhead of opening and closing connections for each image file will be no more. Minifying page code will become counterproductive as the protocol itself will support compression.

The point being that we can start to rethink our approach to hacking performance. Many of our old ways will need to die, some will live on and no doubt time will produce some new creative ways to shave valuable loading time off of webpages.

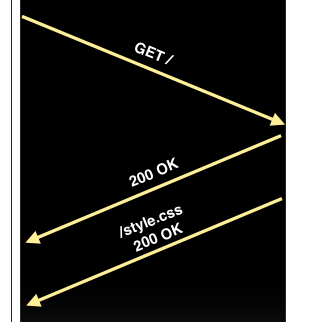

Server push will be a key factor in optimizing websites with HTTP2. When a browser issues a “GET /” request for your website’s homepage, your server will send back the HTML data and also include the style sheet and images. In effect, sending the browser what it would otherwise end up asking for before it asks.

Figure 3: Server push concept, browser requests index page and also gets back CSS file
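
One way to picture server push is as a lookup table from each page to the assets the server expects the browser to need. The Python sketch below is only a planning model under stated assumptions: the manifest contents and the `plan_responses` function are hypothetical, and in the real protocol the server announces each pushed resource with a PUSH_PROMISE frame before sending it.

```python
# Hypothetical manifest: which assets to push alongside each page.
PUSH_MANIFEST = {
    "/": ["/style.css", "/logo.png"],
}


def plan_responses(requested_path: str, manifest=PUSH_MANIFEST):
    """List what the server sends for one request: the page, then any pushed assets."""
    plan = [(requested_path, "response")]
    plan.extend((asset, "push") for asset in manifest.get(requested_path, []))
    return plan


homepage_plan = plan_responses("/")
about_plan = plan_responses("/about")
```

A page with no manifest entry behaves exactly as before, so a site can adopt pushing one page at a time.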

Timeline

So what sort of timeline is the HTTP2 changeover operating on? As I said, the working group plans to submit a proposed standard in November 2014. But things will likely begin to change before then. Here are the key facts to support that view:

- Firefox already leads the pack by supporting an early version of the standard. This support is disabled by default but it shows the eagerness that the web community has for a protocol upgrade.

- The SPDY protocol that predates HTTP2 is already supported by all the major browsers to varying degrees. This lends credence to the idea that HTTP2 will also be adopted early and widely.

- In many cases the updates required to support HTTP2 will be software only. Excepting the occasional caching proxy or edge device, the underlying hardware will not be an obstacle to HTTP2. And software updates are cheap and well established.

Some nay-sayers have pointed to the still incomplete IPv6 changeover as evidence that HTTP2 will take a long time to become fully adopted. But while the IPv6 changeover has been dragging on much longer than it should have, that change is required for technical reasons – it doesn’t bring any commercial or usability benefit. The end user is not aware of what IP version they are using, and frankly there is no obvious advantage to the user either way. HTTP2 however will drive up speed and in the process bring a real business advantage. When every extra second results in more user drop-off, can you afford not to upgrade? Faster websites make for happier users who spend more. For this reason HTTP2 is likely to overtake the IPv6 changeover very quickly.

Getting Out In Front

You can start to take steps now to get ready for HTTP2. Start to review your web development hacks and consider how they might be affected by the new protocol. What can go and what should stay? Start to think about how your web development process will change once server push becomes a reality. How will you package web assets then – for instance, would page-specific CSS or JS assets suddenly make more sense than a single site-wide approach?

Take advantage of the fact that search engines use website speed as a key factor in deciding site rankings. By adopting HTTP2 early you have the opportunity to surpass your competitors by taking advantage of the SEO boost of having the fastest website on the block.

You can already start the changeover. HTTP2 isn’t yet supported at the browser level but the interim SPDY protocol is. Adopt SPDY now by using Apache’s mod_spdy plugin or by taking advantage of Nginx’s SPDY module or even node_spdy in NodeJS.
