Today’s devices pack in a vast array of sensors that gather data about the device and the world around it. For web applications, access to these sensors has grown over time through the addition to the browser of various sensor APIs such as the Geolocation API, and the DeviceOrientation Events API.

Such APIs have been instrumental in evolving the web platform to feature-parity with native apps, while at the same time still offering all the advantages the web has over native.

In this article we take a look at a new API for device sensors: the Generic Sensor API.

Why do we need a new sensor API?

Since we already have APIs to access sensor data in the browser, you might be wondering why we need a new API.

The existing sensor APIs have little in common, especially considering that, broadly, they offer similar types of functionality: reading sensor data, and doing something with that data.

The Generic Sensor API aims to address this by providing a set of consistent APIs to access sensors. The goals of the API are outlined in its spec (recommendation status):

The goal of the Generic Sensor API is to promote consistency across sensor APIs, enable advanced use cases thanks to performant low-level APIs, and increase the pace at which new sensors can be exposed to the Web by simplifying the specification and implementation processes.

The Generic Sensor API achieves this by defining a set of interfaces that expose sensors, consisting of the base Sensor interface, and concrete sensor classes that extend this, making it easy to add new sensors, and providing a consistent way to use them.

Fusion sensors

An interesting aspect of the API is fusion sensors. You can think of these as virtual sensors that combine, or fuse, the data of multiple real sensors into a new sensor that is reliable and robust, or that has filtered and combined the data in such a way that it is appropriate for a certain goal.

We’ll see the benefit of this later when we first build a simple compass app using the Magnetometer sensor directly, and then see how we can improve this based on the AbsoluteOrientationSensor, a fusion sensor which combines data from real magnetometer, accelerometer, and gyroscope sensors.

Sensors supported by the Generic Sensor API

The API has specifications for a number of sensors, such as Accelerometer, Ambient Light Sensor, Magnetometer, Gyroscope, and OrientationSensor, as well as drafts for future sensors, such as Geolocation Sensor and Proximity Sensor.

Chrome is leading the way with respect to implementation. The following motion sensors are enabled by default since Chrome 67:

- Accelerometer

- Gyroscope

- LinearAccelerationSensor

- AbsoluteOrientationSensor

- RelativeOrientationSensor

And the following environmental sensors are not enabled by default, but can be enabled in Chrome flag settings (visit chrome://flags and enable Generic Sensor Extra Classes):

- AmbientLightSensor

- Magnetometer

Edge and Firefox have partial implementations of the AmbientLightSensor, and Firefox has an open issue to implement the Generic Sensor API, but there is no ETA on this yet.

Support for individual sensor implementations is listed separately on caniuse.com.

W3C invites implementations of the Sensor APIs here.

How to use the Generic Sensor API

Let’s run through a couple of examples. Before we begin, note that this API can only be used in secure (HTTPS) contexts.

The general pattern of use is:

- Create sensor

- Define callback function

- Start sensor

- Stop sensor

1. Create sensor

We create a sensor like this:

sensor = new <SensorName>();

replacing <SensorName> with the name of the sensor we want, for example:

sensor = new Accelerometer();

or

sensor = new AbsoluteOrientationSensor();

You get the idea. We could do the same for any of the sensors listed earlier.

Checking for sensor support

Don’t forget to check for sensor support. There are a couple of ways to check for support for a sensor. First, you can check for support with something like this:

if('AmbientLightSensor' in window) {

// Create Sensor

}

Alternatively, you can wrap your code within a try-catch block:

try {

// Create Sensor

} catch(error) {

console.log('Error creating sensor')

//Fallback, do something else etc.

}
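
The two checks can also be combined. The sketch below is one possible way to do that, not something the API prescribes: createSensor and its scope parameter are hypothetical names introduced here for illustration, and the function simply returns null when the sensor class is missing or its constructor throws.

```javascript
// Hypothetical helper: returns a sensor instance, or null if it cannot be created.
// `scope` defaults to the global object (window in a browser).
function createSensor(name, options = {}, scope = globalThis) {
  // Feature detection: is there a class with this name at all?
  if (!(name in scope)) {
    return null;
  }
  try {
    return new scope[name](options);
  } catch (error) {
    // The constructor can still throw, for example when access is blocked
    console.log('Error creating ' + name + ': ' + error.name);
    return null;
  }
}
```

In a page this might be used as createSensor('AmbientLightSensor', {frequency: 1}), falling back to some other behaviour when the result is null.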

Setting sampling frequency

We can also set the data sampling frequency, in Hz:

//Read once per second

sensor = new AmbientLightSensor({frequency: 1});

Note that, for security reasons, the browser implementation of any particular sensor may impose limits on various sensor properties. For example, if you try to set the frequency of the AmbientLightSensor in Chrome to a value greater than 10, you will see a console message:

Maximum allowed frequency value for this sensor type is 10 Hz.

What kind of security concerns might there be around the ambient light sensor? Reflected ambient light levels from a device’s screen can be exploited to determine pixel values of the screen. Limiting the sampling frequency can mitigate threats like this.

2. Define callback function

Defining a sensor callback is straightforward:

sensor.addEventListener('reading', listener);

function listener() {

// Do something with the sensor data

}

3. Start the sensor

This needs no explanation:

//Start the sensor

sensor.start();

4. Stop the sensor

When you’re done with the sensor, you can stop using it with:

//Stop the sensor

sensor.stop();

This pattern will be repeated for all the sensors we use in the following sections.
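
Putting the four steps together, a minimal sketch might look like the following. The watchSensor name and its parameters are hypothetical and only serve to illustrate the pattern; the sensor class is passed in as an argument so that the same function works for any of the sensors listed earlier.

```javascript
// Hypothetical helper (not part of the API): runs the four-step pattern
// for whichever sensor class is passed in, and returns a function that stops it.
function watchSensor(SensorClass, options, onReading) {
  // 1. Create the sensor
  const sensor = new SensorClass(options);

  // 2. Define the callbacks: one for readings, one for errors
  sensor.addEventListener('reading', () => onReading(sensor));
  sensor.addEventListener('error', (event) => {
    console.log('Sensor error:', event.error && event.error.name);
  });

  // 3. Start the sensor
  sensor.start();

  // 4. Hand back a way to stop it later
  return () => sensor.stop();
}
```

In a supporting browser this could be called as const stop = watchSensor(Accelerometer, {frequency: 10}, s => console.log(s.x, s.y, s.z)); and later stop() when the readings are no longer needed.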

Gyroscope

The Gyroscope sensor measures angular velocity around the device’s local X, Y, and Z axes, in radians per second. The sensor’s readings are stored in the x, y, and z properties, so we can output the data to the browser with the following code:

<div id='status'></div>

<script>

if ( 'Gyroscope' in window ) {

let sensor = new Gyroscope();

sensor.addEventListener('reading', function(e) {

document.getElementById('status').innerHTML = 'x: ' + e.target.x + ' y: ' + e.target.y + ' z: ' + e.target.z;

});

sensor.start();

}

</script>
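
Because the readings are rates (radians per second) rather than angles, turning them into an angle means multiplying by the time between readings. The sketch below shows that arithmetic for the z axis only. accumulateRotation and its state object are hypothetical names, and it assumes each reading carries the sensor's timestamp property in milliseconds. Small errors add up over time with this approach, so treat the result as a rough estimate.

```javascript
// Hypothetical helper: accumulates rotation about the z axis from gyroscope readings.
// `state` is { angle: radians so far, lastTimestamp: milliseconds or null }.
function accumulateRotation(state, reading) {
  if (state.lastTimestamp === null) {
    // First reading: nothing to integrate yet, just remember the time
    return { angle: state.angle, lastTimestamp: reading.timestamp };
  }
  const seconds = (reading.timestamp - state.lastTimestamp) / 1000;
  return {
    angle: state.angle + reading.z * seconds, // rad/s multiplied by s gives rad
    lastTimestamp: reading.timestamp
  };
}
```

Inside a reading listener this might be used as state = accumulateRotation(state, e.target), starting from {angle: 0, lastTimestamp: null}.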

Accelerometer

There are actually three sensors defined in the Accelerometer spec: Accelerometer, LinearAccelerationSensor, and GravitySensor. Each of them provides some information about the accelerations applied to the device’s X, Y, and Z axes.

Accelerometer Sensor

The accelerometer provides acceleration information on the device’s X, Y, Z axes. Similar to the Gyroscope, we can output this data to the browser with:

let sensor = new Accelerometer();

sensor.addEventListener('reading', function(e) {

document.getElementById('status').innerHTML = 'x: ' + e.target.x + ' y: ' + e.target.y + ' z: ' + e.target.z;

console.log(e.target);

});

sensor.start();

LinearAcceleration Sensor

This is a subclass of Accelerometer, and measures acceleration applied to the device excluding the acceleration contributed by gravity. It uses x, y, and z properties as above.

Gravity Sensor

Another subclass of Accelerometer. This time the data represents acceleration due to the effect of gravity on each of the device’s axes. (Note that this sensor is not currently available in Chrome.)
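
Since the three sensors describe the same motion with and without gravity, a rough gravity estimate can be derived from the two that Chrome does expose. This is only a sketch under an assumption: that an Accelerometer reading and a LinearAccelerationSensor reading taken at about the same time differ by the gravity component. estimateGravity and magnitude are hypothetical helper names.

```javascript
// Hypothetical helper: estimates the gravity component as the difference between
// an Accelerometer reading and a LinearAccelerationSensor reading.
function estimateGravity(acceleration, linearAcceleration) {
  return {
    x: acceleration.x - linearAcceleration.x,
    y: acceleration.y - linearAcceleration.y,
    z: acceleration.z - linearAcceleration.z
  };
}

// Length of a three-axis reading, in the same units as its components
function magnitude(reading) {
  return Math.hypot(reading.x, reading.y, reading.z);
}
```

For a device lying still, the magnitude of the estimate should stay close to the strength of gravity regardless of how the device is tilted.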

Ambient Light Sensor

Next, let’s look at the Ambient Light Sensor. This sensor reports the environmental light level as detected by the device’s light sensor. Use cases could include accessibility, smart lighting, and camera settings based on ambient light.

Note that at the time of writing, environmental sensors (the Ambient Light Sensor, and Magnetometer) must be manually enabled in Chrome browser by visiting chrome://flags and enabling the Generic Sensor Extra Classes option, so do this first before trying to run any demo code.

The Ambient light sensor reports the detected light level in lux units, between 0 and around 30000 (the upper limit appears to be device-dependent: some devices tested maxed out at 32768, while others at 30000).

First we create the sensor:

sensor = new AmbientLightSensor();

Next let’s consider the callback function. To read the value from this sensor, we check the illuminance property. So we could output the raw data to the browser with:

<div id='status'></div>

<script>

sensor.addEventListener('reading', function(e) {

document.getElementById('status').innerHTML = e.target.illuminance;

});

sensor.start();

</script>

Now let’s do something else with the data, apart from just outputting the raw values to the browser.

A use-case for the ambient light sensor could be to automatically adjust screen brightness based on the ambient light. When the environment is bright, we crank up the screen brightness; when it’s dark, then we crank it down. However, the web browser can’t control the actual raw screen levels. One thing we can do however is adjust the colours on the page to show darker or lighter colours based on the ambient light readings, so we could have a dark theme and a light theme.

The example below shows how to set the background colour of the page based on the ambient light level. We just map the lux value to a value in range 0 to 255, and then set the background colour of the page body to the corresponding grey level:

sensor.addEventListener('reading', function(e) {

document.getElementById('status').innerHTML = e.target.illuminance;

console.log(e.target.illuminance);

var lux = e.target.illuminance;

// Map lux from the range 0 to 500 onto a grey level 0 to 255, clamping brighter readings

var val = Math.round(Math.min(lux, 500) / 500 * 255);

console.log('L:', val);

document.body.style.backgroundColor = 'rgb('+val+','+val+','+val+')';

});

An alternative, and perhaps more useful, example could be to toggle a CSS class, light or dark, whenever the light level crosses some threshold.
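
A minimal sketch of the threshold idea follows. nextTheme is a hypothetical helper, and the two lux thresholds are made-up values that would need tuning on real devices. Using two thresholds instead of one is a design choice to stop the theme flickering when the light level hovers around a single cut-off.

```javascript
// Hypothetical helper: picks 'light' or 'dark' from a lux reading.
// The default thresholds are assumed values, not taken from any spec.
function nextTheme(currentTheme, lux, darkBelow = 50, lightAbove = 100) {
  if (lux < darkBelow) return 'dark';
  if (lux > lightAbove) return 'light';
  return currentTheme; // between the thresholds: keep the current theme
}
```

Inside the reading listener this could be used as theme = nextTheme(theme, e.target.illuminance); followed by document.body.className = theme; with matching light and dark rules in the stylesheet.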

On the Intel github pages there is a pretty nice map demonstration that takes this idea a bit further: when the ambient light level drops below a threshold a Google Map is switched to a dark theme. https://intel.github.io/generic-sensor-demos/ambient-map/build/bundled/

Magnetometer

Next let’s take a look at the Magnetometer sensor. As its name suggests, it measures magnetic fields, in three axes (x, y, z) around the device. We can get the raw data by querying the x, y, and z properties like this:

var sensor = new Magnetometer();

sensor.addEventListener('reading', function(e) {

document.getElementById('status').innerHTML = 'x: ' + e.target.x + ' y: ' + e.target.y + ' z: ' + e.target.z;

});

sensor.start();

With a little bit of trigonometry on the x and y axes (courtesy of the Magnetometer spec), we can compute a compass heading, and display that instead:

var sensor = new Magnetometer();

sensor.addEventListener('reading', function(e) {

let heading = Math.atan2(e.target.y, e.target.x) * (180 / Math.PI);

document.getElementById('status').innerHTML = 'Heading in degrees: ' + heading;

});

sensor.start();

(Multiplying by 180/Math.PI converts from radians to degrees)
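
Math.atan2 returns an angle between -180 and 180 degrees after conversion, whereas a compass heading is usually shown between 0 and 360. The sketch below wraps the same formula in a hypothetical headingFromField helper that also shifts negative angles into that range. Which direction ends up as 0 still depends on how the device's axes are oriented, so a further offset may be needed in practice.

```javascript
// Hypothetical helper: angle in the range [0, 360) from the magnetometer's
// x and y readings, using the same atan2 formula as above.
function headingFromField(x, y) {
  const degrees = Math.atan2(y, x) * (180 / Math.PI); // radians to degrees
  return (degrees + 360) % 360; // atan2 gives -180..180, so shift negatives up
}
```

For example, a field pointing along the negative y axis, headingFromField(0, -1), gives -90 degrees from atan2 and comes back as 270.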

Based on the heading, a simple compass image or a map could be rotated to that heading (we did something similar to this using the DeviceOrientation Events API previously):

sensor.addEventListener('reading', function(e) {

let heading = Math.atan2(e.target.y, e.target.x) * (180 / Math.PI);

//Adjust heading

heading = heading-180;

if(heading < 0) heading = 360 + heading;

document.getElementById('status').innerHTML = 'Heading in degrees: ' + heading;

compass.style.transform = 'rotate(-' + heading + 'deg)';

});

sensor.start();

There are limitations to this approach though, as outlined in the Magnetometer interface description. The main problem is that the device needs to be level with the Earth’s surface, or else tilt compensation should be applied. We can improve on this using the Orientation sensor, which is a fusion sensor combining accelerometer, magnetometer, and gyroscope data.

Fusion sensors: combining real sensors

We briefly mentioned fusion sensors earlier, and how they are a benefit of the Generic Sensor API. By combining data from multiple real sensors, new virtual sensors can be implemented that combine and filter the data so that it’s easier to use, or so that it can be more useful in a particular problem domain.

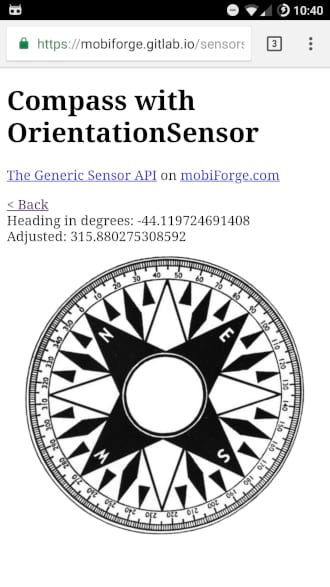

We can see this by improving on our initial Magnetometer compass example with the OrientationSensor.

The OrientationSensor

The OrientationSensor has two subclasses:

- AbsoluteOrientationSensor: reports rotation of the device in world coordinates, based on real accelerometer, gyroscope, and magnetometer sensors

- RelativeOrientationSensor: reports rotation of the device relative to a stationary coordinate system, based on real accelerometer and gyroscope sensors

Screen coordinates syncing

A nice feature of the OrientationSensor is the automatic mapping of sensor readings to screen coordinates, taking screen orientation into account. Previously this mapping would have to be implemented in the application logic, requiring the developer to write JavaScript code to watch out for orientation change events.

The referenceFrame parameter is used to specify whether readings should be relative to the device or the screen orientation:

// This is the default (i.e. referenceFrame could be omitted here)

let sensor = new RelativeOrientationSensor({referenceFrame: "device"});

// Use screen coordinate system

let sensor = new RelativeOrientationSensor({referenceFrame: "screen"});

Using the OrientationSensor

Using these sensors follows the same Generic Sensor API pattern as before:

let sensor = new AbsoluteOrientationSensor({frequency: 60});

...

sensor.start();

let sensor = new RelativeOrientationSensor({frequency: 60});

...

sensor.start();

We can get the quaternion representing the device motion by reading the quaternion property of the sensor event:

e.target.quaternion

Quaternion sensor data

Each rotation data event from these sensors is returned as a quaternion [x,y,z,w] that represents a rotation in 3D space relative to the coordinate system. The components representing the vector part of the quaternion go first, and the scalar component comes after. Returning data in this format makes it more compatible with graphics environments such as WebGL.

A real benefit of using quaternion data can be seen through 3D examples with WebGL, or third party 3D libraries such as Three.js. We won’t go into these here, but there are several excellent examples on Intel’s Generic Sensor API Demos github project that demonstrate some of the possibilities, including 360 degree panorama and VR demos. I recommend checking these out.

We’ll update our previous compass example to make use of the OrientationSensor. Since we’re concerned with the orientation of the device with respect to the real world, this sounds like a job for AbsoluteOrientationSensor.

A compass with AbsoluteOrientationSensor

We can start by modifying our previous Magnetometer compass example to retrieve the quaternion property, instead of the magnetometer’s x, y, and z values:

let compass = document.getElementById('compass');

let sensor = new AbsoluteOrientationSensor();

sensor.addEventListener('reading', function(e) {

var q = e.target.quaternion;

...

});

This time, to compute the heading we need to extract a single Euler angle from the quaternion. After a quick quaternion-to-Euler-angles crash course on Wikipedia, and a bit of experimentation to figure out which sensor properties represented the real world axes we are interested in, we can separate out the compass rotation from the rotations represented in the quaternion with the following expression. Note that because the sensor orders the quaternion as [x, y, z, w], the expression works out to the rotation about the z axis:

Math.atan2(2*q[0]*q[1] + 2*q[2]*q[3], 1 - 2*q[1]*q[1] - 2*q[2]*q[2])

As before, we’ll multiply by 180/Math.PI to convert from radians to degrees, and now we have a heading.

The rest of the example code is the same as before.

let compass = document.getElementById('compass');

let sensor = new AbsoluteOrientationSensor();

sensor.addEventListener('reading', function(e) {

var q = e.target.quaternion;

// Compass rotation in degrees, extracted from the quaternion

let heading = Math.atan2(2*q[0]*q[1] + 2*q[2]*q[3], 1 - 2*q[1]*q[1] - 2*q[2]*q[2])*(180/Math.PI);

if(heading < 0) heading = 360+heading;

compass.style.transform = 'rotate(' + heading + 'deg)';

});

sensor.start();

The advantage of the fusion sensor can be seen in this example: the compass is more stable, and not affected by tilting.

(Note, this has only been tested on a couple of Android devices, so your mileage may vary)
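
One way to sanity-check the quaternion expression without a device is to feed it a rotation whose answer is known in advance. The sketch below does that with two hypothetical helpers, headingFromQuaternion and zRotation. It assumes the [x, y, z, w] ordering described earlier, and builds a quaternion for a pure rotation about the z axis, which the expression should hand back as the same angle.

```javascript
// Hypothetical helper wrapping the expression used in the compass example.
// q is [x, y, z, w], the order used by the orientation sensors.
function headingFromQuaternion(q) {
  const radians = Math.atan2(2 * q[0] * q[1] + 2 * q[2] * q[3],
                             1 - 2 * q[1] * q[1] - 2 * q[2] * q[2]);
  const degrees = radians * (180 / Math.PI);
  return degrees < 0 ? degrees + 360 : degrees;
}

// Hypothetical helper: quaternion for a rotation of `degrees` about the z axis only
function zRotation(degrees) {
  const half = (degrees * Math.PI / 180) / 2;
  return [0, 0, Math.sin(half), Math.cos(half)];
}

// Logs a value very close to 90, floating-point rounding aside
console.log(headingFromQuaternion(zRotation(90)));
```

Real readings mix rotations about all three axes, so this only checks the arithmetic, not how a particular device behaves.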

Geolocation Sensor

There is a request for implementation of a Geolocation Sensor for the Generic Sensor API, but at time of writing no such sensor has been implemented in any of the main browsers. Notably, there has been a discussion on the merits of high-level vs low-level access to underlying sensors.

Geolocation Sensor Issues tracker: https://github.com/wicg/geolocation-sensor/issues

Privacy and permissions

The Generic Sensor API is integrated with the Permissions API. However, the spec states that access can be granted without prompting the user, and it depends on the browser and the sensor in question.
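
To see what a browser currently reports, the permission state can be queried before creating a sensor. The following is a sketch only: querySensorPermissions is a hypothetical helper, and it assumes permission names such as 'accelerometer', 'gyroscope' and 'magnetometer', which not every browser may recognise. Unrecognised names are reported as 'unsupported' rather than treated as errors.

```javascript
// Hypothetical helper: asks the Permissions API about each named permission
// and resolves to an object such as { accelerometer: 'granted', ... }.
// `permissions` defaults to navigator.permissions in the browser.
async function querySensorPermissions(names, permissions = navigator.permissions) {
  const result = {};
  for (const name of names) {
    try {
      const status = await permissions.query({ name });
      result[name] = status.state; // 'granted', 'denied' or 'prompt'
    } catch (error) {
      // The browser does not recognise this permission name
      result[name] = 'unsupported';
    }
  }
  return result;
}
```

For the AbsoluteOrientationSensor, for instance, one might call querySensorPermissions(['accelerometer', 'gyroscope', 'magnetometer']) and only create the sensor if none of the states is 'denied'.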

While access to accelerometer and gyro sensors might not seem to need permission as much as, say, a Geolocation sensor, there can be unforeseen and undesirable uses of seemingly innocuous sensors. Felix Krause, a developer at Google, demonstrated a proof of concept of how a user’s activity can be determined based on the data coming from acceleration and gyro sensors, using the older APIs.

Some of the activities that can be determined are:

- sitting

- standing

- walking

- running

- driving

- taking a picture

- lying in bed

- laying the phone on a table

According to Felix, it would likely be possible to determine if a user was impaired due to alcohol too!

The Generic Sensor API team were quick to point out that security and privacy were central considerations in the API, intimating that this should not be possible with the Generic Sensor API.

Within 2 hours @kennethrohde and @darktears, who both work on Chromium & W3C specs, send me links to where this is being discussed ❤️ pic.twitter.com/cWfuvZeyTy

— Felix Krause (@KrauseFx) September 7, 2017

In the current Chrome implementation anyway, user permission was not required to access any of the sensors used in this article, so it looks like it might still be possible to reproduce this tracking behaviour in the new API. On the other hand, it’s worth noting that sensor readings will only be available for active and visible pages. This should reduce somewhat the tracking risks described above in some circumstances, for example, when the device is in a pocket, but not while the user is actively using the device.

A discussion about sensor permissions can be found here.

Generic Sensor API: a grand-unifying sensor API?

At the outset of this article, we mentioned that the goal of the API was to provide a consistent interface to all device sensors.

It’s early days still. Browser support is minimal. Apart from the AmbientLightSensor, Chrome is the only browser offering support right now.

For the sensors supported in Chrome 67, the API offers a good developer experience, delivering on its promise to provide a consistent API across different sensors.

A notable sensor absent right now is the Geolocation sensor. Although the HTML5 Geolocation API already provides location data (derived from GPS, A-GPS, and Wi-Fi data) to the browser, it seems like a likely candidate for the Generic Sensor API.

Geolocation is a key part of the modern mobile experience, covering everything from navigation to local search, and was the first sensor I thought of when I first heard about the Generic Sensor API. While work is underway, its specification is only at Editor’s draft status, and not an actual recommendation like the other sensors discussed in this article. The Generic Sensor API provides a nice, consistent interface to device sensors but needs to include Geolocation before it can be considered complete.

Examples and code

- Examples: https://mobiforge.gitlab.io/sensors/

- Example code: https://gitlab.com/mobiforge/sensors/

Links and specs

- Generic Sensor API https://www.w3.org/TR/generic-sensor/

- Gyroscope https://www.w3.org/TR/gyroscope/

- Magnetometer https://www.w3.org/TR/magnetometer

- Ambient Light Sensor https://www.w3.org/TR/ambient-light

- Accelerometer https://www.w3.org/TR/accelerometer

- Orientation sensor https://www.w3.org/TR/orientation-sensor

- Geolocation sensor https://wicg.github.io/geolocation-sensor/

- Proximity sensor https://www.w3.org/TR/proximity/

- Sensors for the web https://developers.google.com/web/updates/2017/09/sensors-for-the-web

Main image: Robert Penaloza / Unsplash
